The Crucial Importance of Data Engineering

October 14, 2021

Data Engineering vs Data Science

The terms “Data Science” and “Data Engineering” are often used interchangeably. Years ago, Data Science was the term that encompassed most of the data-related activities that were growing in importance. Everyone needed Data Scientists (and still does!) and there weren’t enough to go around (still true!).

Wearing different hats

But, as often happens in technology, it became clear that wrestling data into something useful for the business required many technical skills. And the people who could do statistical analysis were not necessarily the same people who could build the infrastructure and data architecture needed for that analysis.

For example, most of us have had the experience of putting in hours to create a report. Perhaps it is a financial report. We gather the information from various sources, check it, clean it, organize it, and finally have it in front of us. Now we have to switch mindsets and look at the data from a new perspective: that of analysis. Even if we have both capabilities, it’s hard to switch roles and see the data anew.

The Critical Role of the Data Engineer

As the importance and amount of data increase in business, it is clear that collecting, storing, compiling, and rationalizing data is a separate role from analyzing it. The former belongs to the Data Engineer, the latter to the Data Scientist.

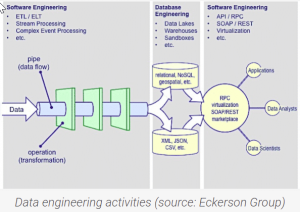

An excellent description of Data Engineering comes from a blog describing the role:

“Engineers design and build things. “Data” engineers design and build pipelines that transform and transport data into a format wherein, by the time it reaches the Data Scientists or other end users, it is in a highly usable state. These pipelines must take data from many disparate sources and collect them into a single warehouse that represents the data uniformly as a single source of truth.”

The following image describes some of the specific tasks and skills of the Data Engineer.

A Picture is Worth a Thousand Words

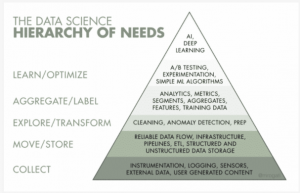

It’s enlightening to have a picture that presents the various skills required for the discipline of Data. The following pyramid was developed by Monica Rogati, an equity partner at Data Collective. It presents a data science hierarchy of needs. Data engineering falls primarily into levels 2 and 3.

A Lot of Tools!

The Data Engineer needs to be conversant in a wide range of tools to do the job. The key deliverable is a data warehouse that presents a single source of truth, continually replenished by automated data pipelines that the Data Engineer develops. The tools are varied, and the Data Engineering ecosystem is constantly updating, changing, and creating them. They include Python, SQL, Snowflake, Matillion, AWS, Azure, Apache Spark, and MongoDB, among others.
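To make the idea of a pipeline feeding a warehouse a little more concrete, here is a minimal sketch in Python and SQL. It is an illustration only, not a description of any real project: the two sources (`BILLING_CSV` and `CRM_RECORDS`), the table name `customer_billing`, and all function names are hypothetical, and an in-memory SQLite database stands in for a real warehouse such as Snowflake.

```python
import csv
import io
import sqlite3

# Hypothetical source 1: a CSV export from a billing system.
BILLING_CSV = """customer_id,amount_usd
101,25.50
102,40.00
"""

# Hypothetical source 2: records from a CRM, with different field names
# and inconsistently formatted values.
CRM_RECORDS = [
    {"id": "101", "name": " Alice "},
    {"id": "102", "name": "bob"},
]


def extract_billing(text):
    """Parse the CSV export into a list of dictionaries."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(billing_rows, crm_records):
    """Join both sources on customer id and normalize names and types."""
    names = {int(r["id"]): r["name"].strip().title() for r in crm_records}
    rows = []
    for row in billing_rows:
        customer_id = int(row["customer_id"])
        rows.append((customer_id, names.get(customer_id), float(row["amount_usd"])))
    return rows


def load(conn, rows):
    """Write the unified rows into the stand-in warehouse table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS customer_billing ("
        "customer_id INTEGER PRIMARY KEY, name TEXT, amount_usd REAL)"
    )
    # INSERT OR REPLACE keeps reruns from duplicating rows.
    conn.executemany(
        "INSERT OR REPLACE INTO customer_billing VALUES (?, ?, ?)", rows
    )
    conn.commit()


def run_pipeline(conn):
    """Run extract, transform, and load, then return the warehouse contents."""
    rows = transform(extract_billing(BILLING_CSV), CRM_RECORDS)
    load(conn, rows)
    return conn.execute(
        "SELECT customer_id, name, amount_usd "
        "FROM customer_billing ORDER BY customer_id"
    ).fetchall()


if __name__ == "__main__":
    warehouse = sqlite3.connect(":memory:")
    for record in run_pipeline(warehouse):
        print(record)
```

The sketch keeps extract, transform, and load as separate functions so that each step can be tested or replaced on its own, and the load step is written so that running the pipeline repeatedly leaves one clean row per customer.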

SPK recently completed a Data Engineering project for one of our clients, a telecom company. The company had numerous disparate database silos containing valuable information needed for better business decisions and strategy. However, it was impossible to collect and relate the data well enough to do the required analysis. Within a few months, SPK had created data pipelines and a warehouse that enabled dynamic data analysis and visualization that the client had been unable to do at all before the project. We’ve created a Case Study describing the effort and are pleased to share it.

If you are interested in learning how Data Engineering can make your business data accessible and useful, contact us or email us at info@spkaa.com.

Chris McHale

CEO

SPK and Associates

Next Steps:

- Contact SPK and Associates to learn more about our Data Engineering and Analysis services.

- Read our recent Case Study about how we collected, compiled, and rationalized data to make it dashboard-ready.

- Subscribe to our blog to read further about smart engineering technology solutions and development operations topics.