As Senior Systems Integrator for SPK and Associates, a California-based IT services company specializing in infrastructure management services, I recently completed an interesting project: building an ESX cluster for a small engineering group of about 40 users. The cluster would host a wide variety of applications, including software repositories, QA testing of Linux appliances, databases, and potentially others. Other than a gigabit network switch and some PDUs, there was no existing infrastructure I could leverage.

For under $18k in hardware and $7k in VMware licensing, we built a 2-node vCenter cluster with full HA support. Based on typical usage, I expect it to handle upwards of 60 virtual machines. Here are the hardware specs we chose:

vSphere Hosts:

- Dell PowerEdge R610

- (2) Xeon X5650 Hex-Core CPUs

- 48GB RAM

- Intel X520-T2 10GbE NIC
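To put the 60-VM estimate in perspective, here is a rough sizing sketch. It is an illustration under simplifying assumptions, not necessarily how the estimate above was derived. It assumes N+1 failover, meaning that if one host fails the survivor must run every VM. It ignores hypervisor overhead, memory overcommit, and page sharing, and it counts physical cores only. The function name `per_vm_budget` is hypothetical.

```python
# Back-of-envelope sizing sketch for the 2-node HA cluster above.
# Assumptions: with one host failed, the survivor must run every VM;
# hypervisor overhead, memory overcommit and page sharing are ignored;
# each VM is counted once regardless of its vCPU count.

HOSTS = 2
RAM_GB_PER_HOST = 48
CORES_PER_HOST = 2 * 6  # two six-core Xeon X5650s (hyper-threads not counted)
TARGET_VMS = 60


def per_vm_budget(hosts, ram_gb, cores, vms, failures_tolerated=1):
    """Return (MB of RAM per VM, VMs per physical core) on the hosts
    still running after `failures_tolerated` hosts go down."""
    surviving = hosts - failures_tolerated
    if surviving < 1:
        raise ValueError("no hosts left to run VMs")
    ram_mb = surviving * ram_gb * 1024 / vms
    vms_per_core = vms / (surviving * cores)
    return ram_mb, vms_per_core


ram_mb, density = per_vm_budget(HOSTS, RAM_GB_PER_HOST, CORES_PER_HOST, TARGET_VMS)
print(f"{ram_mb:.0f} MB RAM per VM, {density:.1f} VMs per physical core")
# prints "819 MB RAM per VM, 5.0 VMs per physical core"
```

Under these assumptions, 60 VMs works out to roughly 0.8 GB of RAM and a fifth of a physical core each while one host is down. That suggests the figure is realistic mainly for lightweight workloads, or with some reliance on ESX memory overcommit. Passing `failures_tolerated=0` shows the headroom when both hosts are healthy.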

Storage: We specced a Dell PowerEdge R510 with built-in direct-attached storage (DAS) and loaded Red Hat Enterprise Linux 5 on it. Any space not needed for VMs lets the server double as a general-purpose filer. The engineering group had no existing shared storage, so this was a huge benefit for them.

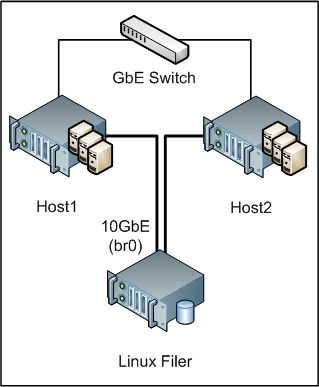

Networking: This is where some creativity was required. As mentioned earlier, there was no existing infrastructure to leverage: no SAN, no filer, only a single gigabit switch. So I equipped both vSphere hosts, as well as the Linux server, with an Intel X520-T2 10GbE NIC. This is the copper version of Intel's adapter, so regular CAT5e or CAT6 cables can be used. The 10GbE links carry both storage and vMotion traffic, while VM network traffic runs over the onboard gigabit NICs.

Because the Intel X520-T2 NICs have only two ports and there is no 10GbE switch, the cluster is limited to two hosts. Once the group is ready to scale to three or more hosts, we simply need to add a 10GbE switch.
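The two-host limit comes down to port counts. The exact cabling isn't spelled out here, so this hypothetical sketch checks two switchless layouts that fit the description. In a star, each host's 10GbE link goes to its own port on the filer, which bridges them. In a full mesh, the hosts and the filer are all cabled directly to each other. Both topologies and the function names are assumptions. In either layout, the busiest node needs one 10GbE port per ESX host, so a dual-port NIC tops out at two hosts.

```python
# Hypothetical sketch: how many ESX hosts fit without a 10GbE switch?
# Both topologies are assumptions; the exact cabling isn't documented.

NIC_PORTS = 2  # the Intel X520-T2 is a dual-port adapter


def ports_needed(hosts: int, topology: str) -> int:
    """10GbE ports required on the busiest node, with no switch."""
    if topology == "star":
        # Each host is cabled to its own port on the filer, which
        # bridges the ports together, so the filer needs one per host.
        return hosts
    if topology == "mesh":
        # Hosts plus the filer, each cabled directly to every other node.
        nodes = hosts + 1
        return nodes - 1
    raise ValueError(f"unknown topology: {topology}")


def max_hosts(topology: str, nic_ports: int = NIC_PORTS) -> int:
    """Largest host count whose port requirement fits on one NIC."""
    hosts = 0
    while ports_needed(hosts + 1, topology) <= nic_ports:
        hosts += 1
    return hosts


for topo in ("star", "mesh"):
    print(f"{topo}: up to {max_hosts(topo)} hosts")
```

A third host would need a third 10GbE port on the filer (or on every node, in the mesh case). That is the point where a 10GbE switch becomes the simpler option.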

Storage Protocol: NFS vs. iSCSI has long been a topic of debate. Historically, the main disadvantage of NFS has been performance, but with the proliferation of 10GbE this has become less of an issue. Using NFS as your VM store provides a huge benefit: you no longer have to carve out large LUNs. VM access is file-based instead of block-based, which lets us take advantage of things like LVM and individual file restores (for example, with NetApp filer snapshots).

While setting up NFS and the storage network, I came across an interesting VMware “bug”. The hosts mounted the Linux server by the same hostname over two separate back-end 10GbE networks (resolved through /etc/hosts entries), and the datastore UUIDs were identical, yet vMotion/host migration failed. After working through this with VMware, we discovered that vCenter stores the IP address of the NFS server in its database, regardless of the /etc/hosts entries. To work around this, I created a bridge interface on the Linux filer. A bridge lets two or more network interfaces on the machine belong to a single VLAN, so the filer presents one IP address to both hosts. Essentially, the X520 NIC becomes a 2-port switch.
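The failure can be illustrated with a toy model. This is not VMware's implementation. It simply assumes, based on the behavior described above, that vCenter identifies an NFS datastore by the server IP address plus the export path rather than by hostname. The hostname `filer`, the export path, the IP addresses, and the function names are all hypothetical.

```python
# Toy model of the vMotion failure described above (not VMware code).
# Assumption: vCenter keys an NFS datastore on the server IP address
# plus the export path, not on the hostname used to mount it.
from typing import Dict, Tuple

EXPORT = "/vmstore"  # hypothetical export path


def datastore_key(hostname: str, hosts_file: Dict[str, str]) -> Tuple[str, str]:
    """Resolve the filer name through one ESX host's /etc/hosts-style
    mapping and return the identity the model says vCenter records."""
    return (hosts_file[hostname], EXPORT)


def can_vmotion(hosts_file_a: Dict[str, str],
                hosts_file_b: Dict[str, str],
                hostname: str = "filer") -> bool:
    # Migration requires both hosts to see the *same* datastore.
    return datastore_key(hostname, hosts_file_a) == datastore_key(hostname, hosts_file_b)


# Before: two separate storage networks, so the same name resolves to a
# different filer IP on each host (addresses are hypothetical).
esx1 = {"filer": "192.168.10.1"}
esx2 = {"filer": "192.168.20.1"}
print(can_vmotion(esx1, esx2))  # False

# After: the filer's two 10GbE ports are bridged behind a single
# address, so both hosts resolve the name to the same IP.
bridged = {"filer": "192.168.10.1"}
print(can_vmotion(bridged, bridged))  # True
```

On Red Hat Enterprise Linux 5, a bridge like this is typically defined in /etc/sysconfig/network-scripts. An ifcfg file for the bridge device carries `TYPE=Bridge` and the IP address, and each member interface's ifcfg file gets a `BRIDGE=` line naming that bridge. The actual device names and addresses depend on the system.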

In the end, with the vMotion issue sorted out, the system has been performing well. To reduce costs further, we could have gone with non-Dell hardware and used ESXi instead of ESX Standard. But this setup struck a good balance between low cost and basic HA capabilities.

Have any interesting VMware setups you’d like to mention? I’d be interested to hear about them. Stay tuned to our IT blog for more network infrastructure tips and how-tos.

Michael Solinap

Sr. Systems Integrator